The following modules are based on the Deep Factorization-Machine (DeepFM) paper:

- Class DeepFM implements the DeepFM framework.
- Class FactorizationMachine implements FM as noted in the above paper.

The DeepFM module does not cover the end-to-end functionality of the published paper. Instead, it covers only the deep component of the publication. If low-order feature interactions should be learned, use the FactorizationMachine module instead, which shares the same embedding input as this module.

LowRankCrossNet example, with `batch_size = 3`, `num_layers = 2` and `in_features = 10`: create `input = torch.randn(batch_size, in_features)`, build `dcn = LowRankCrossNet(in_features=in_features, num_layers=num_layers, low_rank=3)` and compute `output = dcn(input)`. The module's `forward(input: Tensor) → Tensor` takes `input` (torch.Tensor), a tensor with shape [batch_size, in_features].

`LowRankMixtureCrossNet(in_features: int, num_layers: int, num_experts: int = 1, low_rank: int = 1, activation: Union[torch.nn.Module, Callable[[torch.Tensor], torch.Tensor]])`

Low Rank Mixture Cross Net is a DCN V2 implementation from the DCN V2 paper. LowRankMixtureCrossNet defines the learnable crossing parameter of each layer as a low-rank matrix \((N \times r)\) together with a mixture of experts. LowRankCrossNet relies on a single expert to learn feature crosses. This module instead uses \(K\) experts, each learning feature interactions in a different subspace, and adaptively combines the learned crosses using a gating mechanism.

VectorCrossNet likewise transforms the tensor on each layer \(l\). Example, with the same `batch_size = 3`, `num_layers = 2` and `in_features = 10`: create `input = torch.randn(batch_size, in_features)`, build `dcn = VectorCrossNet(in_features=in_features, num_layers=num_layers)` and compute `output = dcn(input)` through `forward(input: Tensor) → Tensor`.
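The following is a minimal pure-Python sketch of one layer of each cross-network variant above. It is written from the update rules in the DCN-V1 and DCN-V2 papers, not from TorchRec's source.

- **Vector kernel:** the DCN-V1 rule.
- **Low-rank:** a low-rank weight \(U V\) standing in for a full \(N \times N\) matrix.
- **Mixture:** a mixture of low-rank experts.

Some parts of the sketch are assumptions:

- The weights are fixed lists rather than learned parameters.
- All function names are hypothetical.
- The gate (softmax over per-expert linear scores of \(x_l\)) is an assumed choice for how experts are combined.

```python
import math
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]  # row-major: m[i] is row i


def matvec(m: Matrix, v: Vector) -> Vector:
    """Return the matrix-vector product m @ v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))


def relu(v: Vector) -> Vector:
    return [max(0.0, x) for x in v]


def vector_cross_layer(x0: Vector, xl: Vector, w: Vector, b: Vector) -> Vector:
    """DCN-V1 rule: x_{l+1} = x0 * (xl . w) + b + xl, with w an N-vector."""
    s = dot(xl, w)  # a single scalar crossing weight per example
    return [x0_i * s + b_i + xl_i for x0_i, b_i, xl_i in zip(x0, b, xl)]


def low_rank_cross_layer(x0: Vector, xl: Vector, u: Matrix, v: Matrix, b: Vector) -> Vector:
    """DCN-V2 low-rank rule: x_{l+1} = x0 * (U (V xl) + b) + xl.

    u is N x r and v is r x N, so U V stands in for a full N x N matrix.
    """
    h = matvec(u, matvec(v, xl))  # project down to r dims, then back up to N
    return [x0_i * (h_i + b_i) + xl_i for x0_i, h_i, b_i, xl_i in zip(x0, h, b, xl)]


def mixture_cross_layer(
    x0: Vector, xl: Vector, experts: List[Dict[str, Matrix]], gates: List[Vector], b: Vector
) -> Vector:
    """x_{l+1} = sum_i G_i(xl) * E_i(xl) + xl, with K low-rank experts.

    E_i(xl) = x0 * (U_i relu(C_i relu(V_i xl)) + b), where each expert holds
    u (N x r), c (r x r) and v (r x N). Gate scores dot(gates[i], xl) are
    softmax-normalized over the experts (an assumption of this sketch).
    """
    scores = [dot(g, xl) for g in gates]
    top = max(scores)  # subtract the max for a numerically stable softmax
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    weights = [e / total for e in exps]
    out = list(xl)  # start from the residual term
    for g_i, e in zip(weights, experts):
        h = matvec(e["u"], relu(matvec(e["c"], relu(matvec(e["v"], xl)))))
        out = [o + g_i * x0_j * (h_j + b_j) for o, x0_j, h_j, b_j in zip(out, x0, h, b)]
    return out
```

To get a multi-layer cross network, stack `num_layers` of these calls. Feed each output back in as `xl` while `x0` stays the original input. In a real module, the kernels, biases and gate weights would be learned separately for each layer.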

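The FactorizationMachine module named in the DeepFM description models low-order, pairwise interactions between feature embeddings. The following plain-Python sketch computes the standard FM second-order term in two ways:

- the naive sum of dot products over all pairs of embeddings;
- the \(O(n \cdot k)\) reformulation from the original factorization-machine paper.

The sketch assumes one embedding per field with an implicit feature value of 1. The function names are hypothetical, and this is not TorchRec's implementation, which operates on tensors.

```python
from itertools import combinations
from typing import List


def fm_interaction_naive(embeddings: List[List[float]]) -> float:
    """Sum of <v_i, v_j> over all pairs i < j of field embeddings: O(n^2 * k)."""
    return sum(
        sum(a * b for a, b in zip(vi, vj))
        for vi, vj in combinations(embeddings, 2)
    )


def fm_interaction_fast(embeddings: List[List[float]]) -> float:
    """Same value via 0.5 * sum_f [(sum_i v_if)^2 - sum_i v_if^2]: O(n * k)."""
    k = len(embeddings[0])
    total = 0.0
    for f in range(k):
        col = [e[f] for e in embeddings]  # dimension f across all fields
        s = sum(col)
        total += s * s - sum(c * c for c in col)
    return 0.5 * total
```

Both functions return the same number. The squared sum counts every pair twice and includes the self-products, so subtracting the squares and halving leaves each pair exactly once.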
It’s not quite clear what the API had to do with the flood of dungeon errors on Friday, but let’s hope this is not going to be a regular thing.

Follow me on Twitter, YouTube, Facebook and Instagram. Subscribe to my free weekly content round-up newsletter, God Rolls. Pick up my sci-fi novels, the Herokiller series and The Earthborn Trilogy.

The torchrec modules contain a collection of various modules. These modules include:

- extensions of nn.Embedding and nn.EmbeddingBag, such as EmbeddingBagCollection;
- common module patterns such as MLP and SwishLayerNorm;
- custom modules for TorchRec such as PositionWeightedModule;
- EmbeddingTower and EmbeddingTowerCollection, a logical “tower” of embeddings.

Activation modules:

`class SwishLayerNorm(input_dims: Union[int, List[int], torch.Size], device: Optional[torch.device] = None)`

Applies the Swish function with layer normalization: Y = X * Sigmoid(LayerNorm(X)), where * is element-wise multiplication.

Parameters:

- input_dims (Union[int, List[int], torch.Size]) – dimensions to normalize over. Setting input_dims to the sizes of the input's last two dimensions applies layer normalization over those two dimensions.
- device (Optional[torch.device]) – default compute device.
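The following pure-Python sketch illustrates the SwishLayerNorm formula. It normalizes a single vector, which corresponds to input_dims covering only the last dimension, and then applies Y = X * Sigmoid(LayerNorm(X)) element by element. The sketch makes three assumptions:

- It uses the `nn.LayerNorm` default of eps = 1e-5.
- It uses the biased variance that layer normalization uses.
- The affine step is the identity (weight 1, bias 0, as at initialization).

The helper names are hypothetical.

```python
import math
from typing import List


def layer_norm(x: List[float], eps: float = 1e-5) -> List[float]:
    """Shift x to zero mean and scale to unit (biased) variance."""
    n = len(x)
    mean = sum(x) / n
    var = sum((v - mean) ** 2 for v in x) / n
    return [(v - mean) / math.sqrt(var + eps) for v in x]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def swish_layer_norm(x: List[float]) -> List[float]:
    """Y = X * Sigmoid(LayerNorm(X)): gate each input by its normalized value."""
    return [v * sigmoid(n) for v, n in zip(x, layer_norm(x))]
```

A constant input normalizes to all zeros, so every element is gated by sigmoid(0) = 0.5. Inputs above the mean pass through mostly unchanged, and inputs below the mean are damped toward zero.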

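EmbeddingBagCollection builds on nn.EmbeddingBag, which looks up several embedding rows per example (a "bag") and pools them into one vector. The following pure-Python sketch shows that lookup-and-pool step. It assumes nn.EmbeddingBag's convention of a flat `indices` list plus `offsets` that mark where each bag starts. It supports the "sum" and "mean" modes and returns a zero vector for an empty bag. `embedding_bag` is a hypothetical helper, not a TorchRec or PyTorch API.

```python
from typing import List

Matrix = List[List[float]]


def embedding_bag(
    weight: Matrix, indices: List[int], offsets: List[int], mode: str = "mean"
) -> Matrix:
    """Pool embedding rows per bag.

    Bag b covers indices[offsets[b]:offsets[b + 1]]; the last bag runs to the
    end of indices. Empty bags pool to a zero vector.
    """
    if mode not in ("sum", "mean"):
        raise ValueError(f"unsupported mode: {mode}")
    dim = len(weight[0])
    bounds = list(offsets) + [len(indices)]
    pooled_bags = []
    for start, end in zip(bounds[:-1], bounds[1:]):
        rows = [weight[i] for i in indices[start:end]]  # embedding lookup
        if not rows:
            pooled_bags.append([0.0] * dim)
            continue
        pooled = [sum(col) for col in zip(*rows)]  # column-wise sum over the bag
        if mode == "mean":
            pooled = [p / len(rows) for p in pooled]
        pooled_bags.append(pooled)
    return pooled_bags
```

Pooling inside the lookup keeps the output size fixed at one vector per bag, however many indices each example has.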
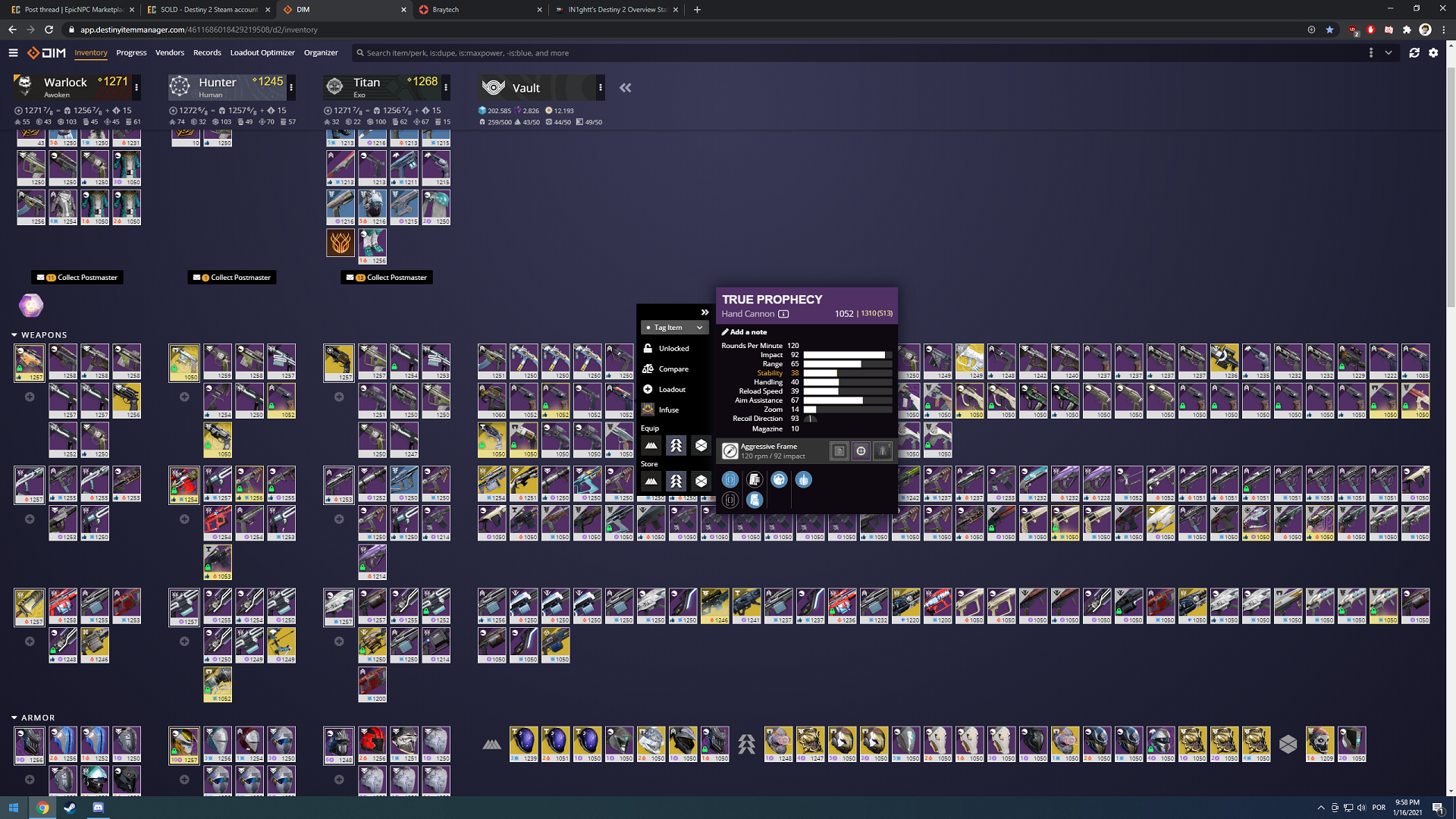

It’s pretty wild to realize just how much of Destiny 2 relies on the API and the third-party services that players have built for the game. Bungie will introduce an in-game LFG tool next year after Lightfall, but it’s clear things like Light.gg and DIM will remain essential to the game for a long while to come, and the game is almost unplayable without them. Gear management is a nightmare, and I do hope Bungie continues to try to fix some of these issues with in-game upgrades rather than just relying on DIM until the end of time. Some of this could get better with the upcoming addition of loadouts, also arriving next year, as it seems like that would be able to pull gear from anywhere to assemble a specific loadout when activated. But DIM will remain essential too, I’m guessing. This current API situation should hopefully be resolved by tomorrow.
